Note

This C++ sample has a corresponding Python sample.

Spatter Tracking using C++

The Analytics API provides the Metavision::SpatterTrackerAlgorithm class for spatter tracking or, more generally, for tracking simple non-colliding moving objects. This tracking algorithm is a lighter implementation of Metavision::TrackingAlgorithm and is suited only to non-colliding objects.

The sample metavision_spatter_tracking shows how to use Metavision::SpatterTrackerAlgorithm to track spatters.

The source code of this sample can be found in

<install-prefix>/share/metavision/sdk/analytics/cpp_samples/metavision_spatter_tracking

when installing Metavision SDK from installer or packages. For other deployment methods, check the page Path of Samples.

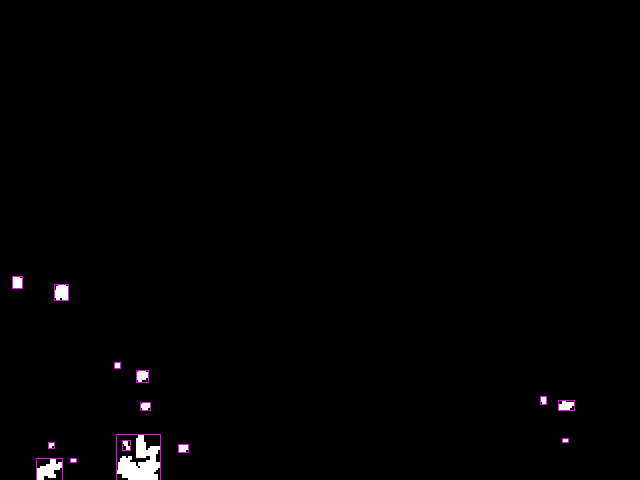

Expected Output

Metavision Spatter Tracking sample visualizes all events and the tracked objects by showing a bounding box around each tracked object with an ID of the tracked object shown next to the bounding box:

Setup & requirements

By default, Metavision Spatter Tracking looks for objects with a size between 10x10 and 300x300 pixels.

Use the command line options --min-size and --max-size to adapt the sample to your scene.

How to start

You can directly execute the pre-compiled binary installed with Metavision SDK or compile the source code as described in this tutorial.

To start the sample based on recorded data, provide the full path to a RAW or HDF5 event file (here, we use a file from our Sample Recordings):

Linux

./metavision_spatter_tracking -i sparklers.raw

Windows

metavision_spatter_tracking.exe -i sparklers.raw

In sparklers.raw from Sample Recordings, the sparkles move very fast and are very small. To track the sparkles in this particular file, the tracking parameters must be tuned, for example:

Linux

./metavision_spatter_tracking -i sparklers.raw --processing-accumulation-time 50 --cell-width 4 --cell-height 4 --activation-threshold 2

Windows

metavision_spatter_tracking.exe -i sparklers.raw --processing-accumulation-time 50 --cell-width 4 --cell-height 4 --activation-threshold 2

To start the sample based on the live stream from your camera, run:

Linux

./metavision_spatter_tracking

Windows

metavision_spatter_tracking.exe

To start the sample on a live stream with some camera settings (biases, ROI, Anti-Flicker, STC, etc.) loaded from a JSON file, you can use the command line option --input-camera-config (or -j):

Linux

./metavision_spatter_tracking -j path/to/my_settings.json

Windows

metavision_spatter_tracking.exe -j path\to\my_settings.json

To check for additional options:

Linux

./metavision_spatter_tracking -h

Windows

metavision_spatter_tracking.exe -h

Algorithm Overview

Spatter Tracking Algorithm

The tracking algorithm consumes CD events and produces tracking results as Metavision::EventSpatterCluster objects. These tracking results contain the bounding boxes and unique IDs of the tracked objects.

The tracking algorithm processes the events in successive time slices. First, the input events are continuously accumulated in a user-defined grid of cells during the time slice. Then, at the end of the time slice, cells where the event count is high are marked as activated. Clusters are formed from connected activated cells, and a bounding box is defined around each of them.
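To make the accumulation and clustering steps more concrete, here is a minimal C++ sketch. It is not the SDK implementation: the `Event`, `Box` and `CellGrid` names are hypothetical, a cell is assumed to be activated when its count reaches `activation_threshold` (the sample's --activation-threshold option may be defined differently), and clusters are assumed to be built from 4-connected cells.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <queue>
#include <utility>
#include <vector>

// Hypothetical minimal event type, standing in for Metavision::EventCD.
struct Event {
    int x, y;
    std::int64_t t; // timestamp in microseconds
};

// Pixel bounding box; x_max and y_max are exclusive.
struct Box {
    int x_min, y_min, x_max, y_max;
};

// Hypothetical grid (not the SDK class): counts the events of one time slice per
// cell_w x cell_h cell, then groups 4-connected activated cells into clusters.
class CellGrid {
public:
    CellGrid(int width, int height, int cell_w, int cell_h)
        : cell_w_(cell_w), cell_h_(cell_h), cols_((width + cell_w - 1) / cell_w),
          rows_((height + cell_h - 1) / cell_h), counts_(static_cast<std::size_t>(cols_) * rows_, 0) {}

    // Accumulates events; assumes every event lies inside the sensor area.
    void accumulate(const std::vector<Event> &events) {
        for (const auto &ev : events)
            ++counts_[index(ev.x / cell_w_, ev.y / cell_h_)];
    }

    // End of slice: cells with at least activation_threshold events are activated,
    // connected activated cells form one cluster, and each cluster's pixel bounding
    // box is returned (border boxes are not clamped to the sensor size).
    // The counts are then reset for the next slice.
    std::vector<Box> extract_clusters(int activation_threshold) {
        std::vector<bool> visited(counts_.size(), false);
        std::vector<Box> boxes;
        for (int r0 = 0; r0 < rows_; ++r0) {
            for (int c0 = 0; c0 < cols_; ++c0) {
                if (visited[index(c0, r0)] || counts_[index(c0, r0)] < activation_threshold)
                    continue;
                Box cells{c0, r0, c0, r0}; // cluster extent in cell units (inclusive)
                std::queue<std::pair<int, int>> todo;
                todo.push({c0, r0});
                visited[index(c0, r0)] = true;
                while (!todo.empty()) { // breadth-first flood fill
                    const auto [c, r] = todo.front();
                    todo.pop();
                    cells.x_min = std::min(cells.x_min, c);
                    cells.x_max = std::max(cells.x_max, c);
                    cells.y_min = std::min(cells.y_min, r);
                    cells.y_max = std::max(cells.y_max, r);
                    const int nbrs[4][2] = {{c - 1, r}, {c + 1, r}, {c, r - 1}, {c, r + 1}};
                    for (const auto &n : nbrs) {
                        if (n[0] < 0 || n[0] >= cols_ || n[1] < 0 || n[1] >= rows_)
                            continue;
                        const std::size_t i = index(n[0], n[1]);
                        if (!visited[i] && counts_[i] >= activation_threshold) {
                            visited[i] = true;
                            todo.push({n[0], n[1]});
                        }
                    }
                }
                boxes.push_back({cells.x_min * cell_w_, cells.y_min * cell_h_,
                                 (cells.x_max + 1) * cell_w_, (cells.y_max + 1) * cell_h_});
            }
        }
        std::fill(counts_.begin(), counts_.end(), 0);
        return boxes;
    }

private:
    std::size_t index(int c, int r) const { return static_cast<std::size_t>(r) * cols_ + c; }

    int cell_w_, cell_h_, cols_, rows_;
    std::vector<int> counts_;
};
```

With 4x4 cells and a threshold of 2, two adjacent busy cells merge into a single 8x4 box, while an isolated cell holding a single event is ignored.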

Clusters that are smaller or larger than the user-defined size limits are filtered out. Finally, the newly detected clusters are matched with the previously detected clusters, based on their center-to-center distance, to update the positions of the tracked Metavision::EventSpatterCluster objects.
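The size filtering and matching steps can be sketched in the same spirit. This is an illustration under assumptions, not the SDK's matching logic: `filter_by_size()`, `match_tracks()` and the `max_distance` gate are hypothetical, matching is done greedily by nearest center, and previous tracks without a match are simply dropped.

```cpp
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

// Pixel bounding box; x_max and y_max are exclusive.
struct Box {
    int x_min, y_min, x_max, y_max;
};

// Hypothetical tracked object: an ID and its latest bounding box.
struct Track {
    int id;
    Box box;
};

// Keeps only the boxes whose width and height both lie within [min_size, max_size].
std::vector<Box> filter_by_size(const std::vector<Box> &boxes, int min_size, int max_size) {
    std::vector<Box> kept;
    for (const auto &b : boxes) {
        const int w = b.x_max - b.x_min, h = b.y_max - b.y_min;
        if (w >= min_size && h >= min_size && w <= max_size && h <= max_size)
            kept.push_back(b);
    }
    return kept;
}

// Euclidean distance between the centers of two boxes.
double center_distance(const Box &a, const Box &b) {
    const double dx = (a.x_min + a.x_max - b.x_min - b.x_max) / 2.0;
    const double dy = (a.y_min + a.y_max - b.y_min - b.y_max) / 2.0;
    return std::hypot(dx, dy);
}

// Greedy matching: each new box takes the ID of the closest still-unmatched
// previous track within max_distance; otherwise it starts a track with next_id.
std::vector<Track> match_tracks(const std::vector<Track> &previous, const std::vector<Box> &detections,
                                double max_distance, int &next_id) {
    std::vector<bool> used(previous.size(), false);
    std::vector<Track> updated;
    for (const auto &det : detections) {
        int best = -1;
        double best_dist = std::numeric_limits<double>::max();
        for (std::size_t i = 0; i < previous.size(); ++i) {
            const double d = center_distance(previous[i].box, det);
            if (!used[i] && d <= max_distance && d < best_dist) {
                best = static_cast<int>(i);
                best_dist = d;
            }
        }
        if (best >= 0) {
            used[best] = true;
            updated.push_back({previous[best].id, det});
        } else {
            updated.push_back({next_id++, det});
        }
    }
    return updated;
}
```

A greedy nearest-center assignment is cheap and is reasonable when objects do not collide; an optimal assignment method would mainly pay off when several detections compete for the same track.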

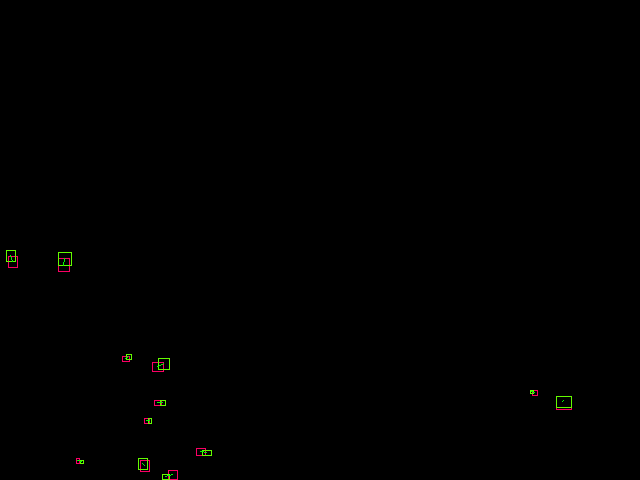

The algorithm’s steps are illustrated below:

Heatmap of events count during a time slice

Bounding box around detected clusters

Matching previous clusters (in red) to new detected ones (in green)

Code Overview

Pipeline

The sample implements the following pipeline:

Note

The pipeline can also filter the events with the SpatioTemporalContrastAlgorithm, which is enabled and configured using the --stc-threshold command line option. Other software filters, such as ActivityNoiseFilter or TrailFilter, could be used instead. Alternatively, to filter out events, you could enable some of the ESP blocks of your sensor.

Slicing and processing

The Metavision::EventBufferReslicerAlgorithm is used to produce event slices with the appropriate duration. The slicer is configured with a slicing condition (here, a fixed duration of accumulation_time_ microseconds) and with the callback to call when a slice has been completed. In this case, that callback processes the spatter tracking results:

```cpp
using ConditionStatus = Metavision::EventBufferReslicerAlgorithm::ConditionStatus;
using Condition       = Metavision::EventBufferReslicerAlgorithm::Condition;

// Called by the slicer each time a slice is completed, with the timestamp of that slice
const auto tracking_cb = [this](ConditionStatus, Metavision::timestamp ts, std::size_t n) { tracker_callback(ts); };
// Slices of fixed duration: accumulation_time_ microseconds
const auto slicing_condition = Condition::make_n_us(accumulation_time_);
slicer_ = std::make_unique<Metavision::EventBufferReslicerAlgorithm>(tracking_cb, slicing_condition);
```
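The slicing logic can be pictured with a simplified, hypothetical `FixedDurationSlicer`, which is not Metavision::EventBufferReslicerAlgorithm: events are forwarded in chunks to a processing callback, and a slice callback fires each time `duration_us` microseconds have elapsed. Starting the first slice at the first event's timestamp is an assumption of this sketch, as is the exact order of the two callbacks.

```cpp
#include <cstdint>
#include <functional>
#include <utility>
#include <vector>

// Hypothetical minimal event type, standing in for Metavision::EventCD.
struct Event {
    int x, y;
    std::int64_t t; // timestamp in microseconds
};

// Hypothetical fixed-duration slicer (a simplified stand-in, not the SDK class).
class FixedDurationSlicer {
public:
    using EventsCallback = std::function<void(const Event *, const Event *)>;
    using SliceCallback  = std::function<void(std::int64_t)>;

    FixedDurationSlicer(std::int64_t duration_us, SliceCallback on_slice)
        : duration_us_(duration_us), on_slice_(std::move(on_slice)) {}

    // Forwards events to on_events; whenever an event reaches the end of the open
    // slice, the events before it are flushed, then on_slice(slice_end) is called.
    void process_events(const Event *begin, const Event *end, const EventsCallback &on_events) {
        const Event *chunk_begin = begin;
        for (const Event *it = begin; it != end; ++it) {
            if (next_end_ < 0)
                next_end_ = it->t + duration_us_; // the first event opens the first slice
            while (it->t >= next_end_) {         // may close several (empty) slices
                on_events(chunk_begin, it);
                chunk_begin = it;
                on_slice_(next_end_);
                next_end_ += duration_us_;
            }
        }
        on_events(chunk_begin, end); // remaining events belong to the open slice
    }

private:
    std::int64_t duration_us_;
    SliceCallback on_slice_;
    std::int64_t next_end_ = -1; // end timestamp of the open slice, -1 before any event
};
```

Flushing the events of a slice before calling the slice callback mirrors the pattern of the sample: the tracker has seen every event of the slice by the time its results are retrieved.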

The events are processed on the fly in the camera’s callback, where the slicer passes them to the tracker:

```cpp
Metavision::EventsCDCallback camera_callback;
if (stc_threshold_ > 0) {
    stc_filter_.reset(
        new Metavision::SpatioTemporalContrastAlgorithm(sensor_width, sensor_height, stc_threshold_, false));
    camera_callback = [this](const Metavision::EventCD *begin, const Metavision::EventCD *end) {
        // Filter the incoming events with the STC filter
        filtered_events_.clear();
        stc_filter_->process_events(begin, end, std::back_inserter(filtered_events_));
        // Frame generator must be called first
        if (frame_generation_)
            frame_generation_->process_events(filtered_events_.cbegin(), filtered_events_.cend());
        // The slicer forwards the events of the current slice to the tracker
        slicer_->process_events(filtered_events_.cbegin(), filtered_events_.cend(),
                                [&](auto begin_it, auto end_it) { tracker_->process_events(begin_it, end_it); });
    };
} else {
    camera_callback = [this](const Metavision::EventCD *begin, const Metavision::EventCD *end) {
        // Frame generator must be called first
        if (frame_generation_)
            frame_generation_->process_events(begin, end);
        slicer_->process_events(begin, end,
                                [&](auto begin_it, auto end_it) { tracker_->process_events(begin_it, end_it); });
    };
}
camera_->cd().add_callback(camera_callback);
```

If the STC filter is enabled (--stc-threshold greater than 0), it is applied to the events before they are passed to the frame generator and the slicer.

Spatter tracking results

When the end of a slice has been detected, we retrieve the results of the spatter tracking algorithm. Then, we generate an image where the bounding boxes and the IDs of the tracked objects are displayed on top of the events.

```cpp
void Pipeline::tracker_callback(Metavision::timestamp ts) {
    tracker_->get_results(ts, clusters_);
    if (tracker_logger_)
        tracker_logger_->log_output(ts, clusters_);

    if (frame_generation_) {
        frame_generation_->generate(ts, back_img_);
        tracked_clusters_->update_trajectories(ts, clusters_);
        Metavision::draw_tracking_results(ts, clusters_.cbegin(), clusters_.cend(), back_img_);
        tracked_clusters_->draw(back_img_);
        draw_no_track_zones(back_img_);

        if (video_writer_)
            video_writer_->write_frame(ts, back_img_);
        if (window_)
            window_->show(back_img_);
    }

    if (timer_)
        timer_->update_timer(ts);
}
```

The Metavision::OnDemandFrameGenerationAlgorithm class allows us to buffer input events (through Metavision::OnDemandFrameGenerationAlgorithm::process_events()) and to generate an image on demand (through Metavision::OnDemandFrameGenerationAlgorithm::generate()). After the event image is generated, the bounding boxes and the IDs are rendered using the Metavision::draw_tracking_results() function.
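The buffer-then-generate idea can be illustrated with a stripped-down sketch. It assumes events arrive in timestamp order and that `generate()` is called with non-decreasing timestamps; the `PolarityEvent` and `OnDemandFrameSketch` names, the accumulation window and the gray levels are hypothetical choices, not the behavior of Metavision::OnDemandFrameGenerationAlgorithm.

```cpp
#include <cstddef>
#include <cstdint>
#include <deque>
#include <vector>

// Hypothetical event with polarity, standing in for Metavision::EventCD.
struct PolarityEvent {
    int x, y;
    std::int16_t p; // 1 = ON, 0 = OFF
    std::int64_t t; // timestamp in microseconds
};

// Hypothetical on-demand frame generator (not the SDK class).
class OnDemandFrameSketch {
public:
    OnDemandFrameSketch(int width, int height, std::int64_t accumulation_us)
        : width_(width), height_(height), accumulation_us_(accumulation_us) {}

    // Buffers events; nothing is rendered yet.
    template <typename It>
    void process_events(It begin, It end) {
        buffer_.insert(buffer_.end(), begin, end);
    }

    // Renders the events with t in (ts - accumulation_us, ts] into a grayscale frame:
    // 0 = no event, 255 = ON, 128 = OFF (the latest event at a pixel wins).
    void generate(std::int64_t ts, std::vector<std::uint8_t> &frame) {
        frame.assign(static_cast<std::size_t>(width_) * height_, 0);
        // Events older than the window can be dropped, since ts never decreases.
        while (!buffer_.empty() && buffer_.front().t <= ts - accumulation_us_)
            buffer_.pop_front();
        for (const auto &ev : buffer_) {
            if (ev.t > ts)
                break; // events after ts stay buffered for the next frame
            frame[static_cast<std::size_t>(ev.y) * width_ + ev.x] = ev.p ? 255 : 128;
        }
    }

private:
    int width_, height_;
    std::int64_t accumulation_us_;
    std::deque<PolarityEvent> buffer_;
};
```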

Display

Finally, the generated frame is displayed on the screen. The following image shows an example of output:

Going further

Estimate Speed of a tracked object

The sample uses the Metavision::EventSpatterCluster structure to store the tracking results, which contain the bounding boxes and unique IDs of the tracked objects. This structure also provides the coordinates of the bounding box and the timestamp of the track. Hence, given two positions of a tracked object at two different timestamps, its speed over that time interval can be estimated.
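As an illustration, the sketch below estimates the velocity of a tracked object from two snapshots of the same track ID, using the displacement of the bounding-box centers. The `TrackSnapshot` type and its fields are hypothetical stand-ins; the actual member names of Metavision::EventSpatterCluster should be checked in the API reference. The result is in pixels per second; converting it to physical units would require a calibration of the scene.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical snapshot of a tracked object; the fields of
// Metavision::EventSpatterCluster may be named differently.
struct TrackSnapshot {
    std::int64_t t_us;         // timestamp of the track, in microseconds
    float x, y, width, height; // bounding box: top-left corner and size, in pixels
};

struct Velocity {
    double vx, vy; // pixels per second
    double speed;  // magnitude, pixels per second
};

// Average velocity between two snapshots of the same track ID,
// computed from the displacement of the bounding-box centers.
Velocity estimate_velocity(const TrackSnapshot &a, const TrackSnapshot &b) {
    assert(b.t_us > a.t_us && "snapshots must be ordered in time");
    const double dt_s = static_cast<double>(b.t_us - a.t_us) / 1e6;
    const double dx = (b.x + b.width / 2.0) - (a.x + a.width / 2.0);
    const double dy = (b.y + b.height / 2.0) - (a.y + a.height / 2.0);
    return {dx / dt_s, dy / dt_s, std::hypot(dx, dy) / dt_s};
}
```

For example, a box whose center moves by (30, 40) pixels in 10 ms has a speed of 5000 pixels per second. Averaging over more than two snapshots would make the estimate less sensitive to jitter in the bounding boxes.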